Traditional surveys have become the most common way companies gather feedback on consumer attitudes, opinions and sentiments, but this approach typically delivers a subpar experience to customers. Clinical and impersonal at best, traditional surveys are hurting the market research industry’s reputation among consumers. It’s no wonder survey response rates continue to drop.

Complicating the problem is the fact that email has fallen out of favor. Most people, especially younger ones, rarely check email outside of work. Messaging platforms, SMS and other mobile-first channels, on the other hand, have grown in popularity.

At Rival Technologies, we are inspired to use modern technology to face these challenges head on. One of the ways we do this is to completely re-imagine the survey experience as a conversation or a chat.

Chats (or "conversational surveys" or "chat surveys") are surveys hosted through messaging apps (like Facebook Messenger) or web browsers. Unlike traditional surveys, chats are conversational and are therefore more friendly, informal and shorter.

Through a mix of text, buttons 🔘, images 📸, audio files 🎧 and video 📹, chats provide a more fun way for respondents to share their feedback. Chats rival traditional surveys by giving researchers and marketers a way to seamlessly reach respondents through apps they use frequently.

Chats are:

☑️ Mobile-first. They aren’t simply online surveys resized for mobile devices. Chats are a complete reimagining of the survey experience optimized for mobile.

☑️ Persistent and timely. Since chats are pushed through messaging apps rather than emails, people are more likely to respond. You can time deployment to get feedback while respondents are “in the moment.” 👌

☑️ Conversational. Chats leverage the same channels that people use to talk to their friends and family every day. The experience feels more like a conversation rather than a run-of-the-mill survey.

☑️ A source of richer insights. Open-ended feedback can be requested as text, image, video, or audio—giving your insights more context and color.

That said, whenever you innovate in the research space, you can’t just be new for the sake of being cute. You have to make sure you’re developing a legitimate tool.

At Rival, we’re both researchers and technologists, so while we know that chats are a promising way of engaging with consumers, we also wanted to understand their impact both on the research experience and the quality of data companies get from this new way of gathering feedback and insight.

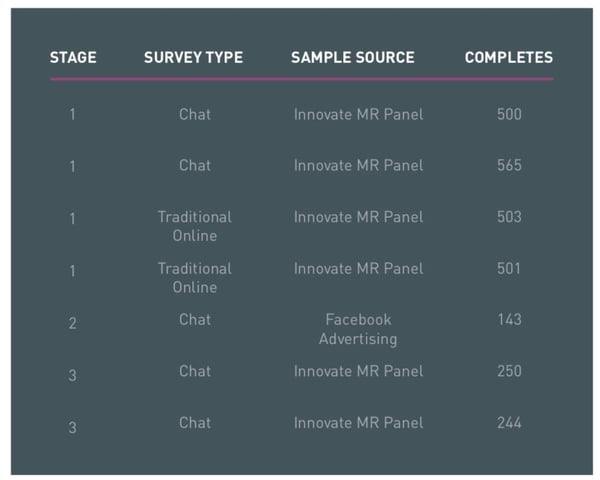

To compare the chat experience versus the traditional survey experience, we did a multi-stage project in July 2018. To compare apples to apples, we used the same set of questions for both chats and traditional surveys—the topic was people’s attitudes on and usage of sunscreen. We chose this theme as it was relevant to most people; it was also a topic that a company might explore in a real study.

For recruitment, we leveraged InnovateMR’s panel and river sample. We also recruited respondents through Facebook ads. Both the chat and the survey started with questions about sunscreen, but we also asked questions about people’s survey experience at the end.

In this report, we will focus less on the insights we got about sunscreen usage and more on the implications of using chats as a survey methodology. Our goal is to explore the following topics:

🔘 Respondent experience:

How do respondents feel about chats as a new way of participating in research?

🔘 Demographics:

Is there a difference (from a demographics perspective) between people who answer chat surveys versus those who answer more traditional online surveys? And if so, what do those differences mean for researchers and the quality of insights they can expect from chats?

🔘 Result comparison:

How similar or different are the answers given by people who took chats vs those who took traditional surveys?

More than ever, the insights industry needs to prioritize the experience people have when they participate in research. The quality of customer feedback—and the speed at which those insights are received—very much depend on whether research participants find the experience enjoyable.

Simply put, a great survey experience results in a positive brand or category experience and encourages customers to come back and continue to provide their honest feedback. The experience needs to be pleasant, fun or interesting. If the experience is tedious, boring or repetitive, there’s a high likelihood that people will put off answering surveys or avoid them altogether. If your company consistently provides a good experience, you’ll have a more engaged community of customers, and you can get feedback from a significant number of people more quickly.

People like the experience with chats more than they do with traditional online surveys.

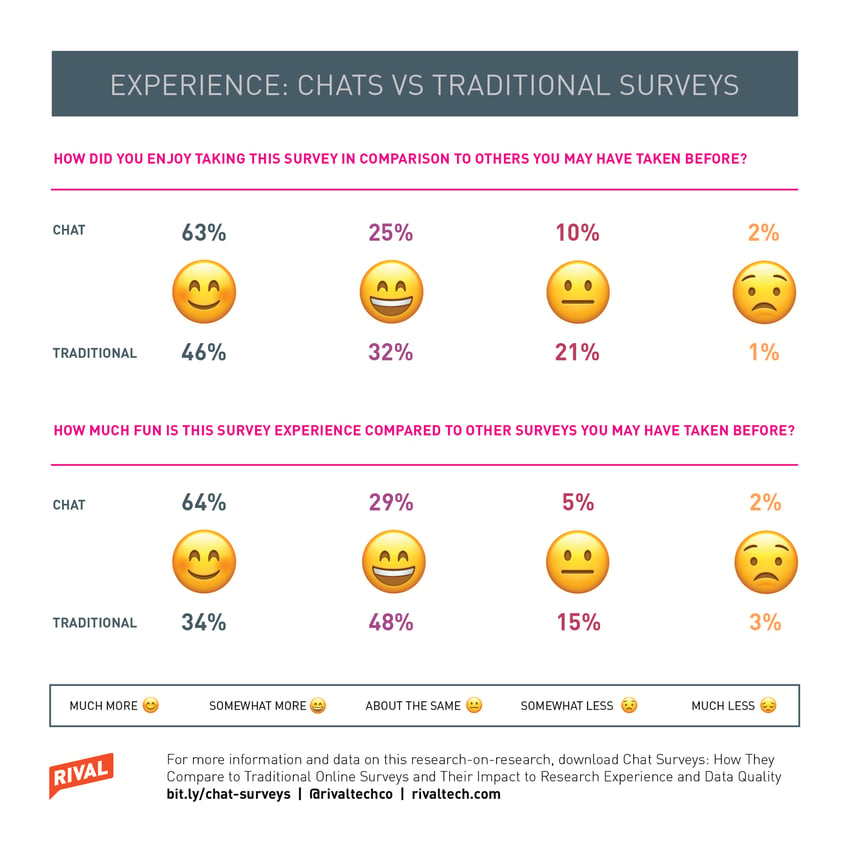

For this study, we asked respondents about four different aspects of their experience: enjoyment, fun, ease and length.

An overwhelming 88% of participants told us they found the chat experience either “much more” or “somewhat more” enjoyable than other surveys they’ve taken in the past.

Respondents also feel chats are more fun than traditional surveys. Sixty-four percent said the experience was “very fun”; in contrast, only 34% of people who took the more traditional online survey said the same.

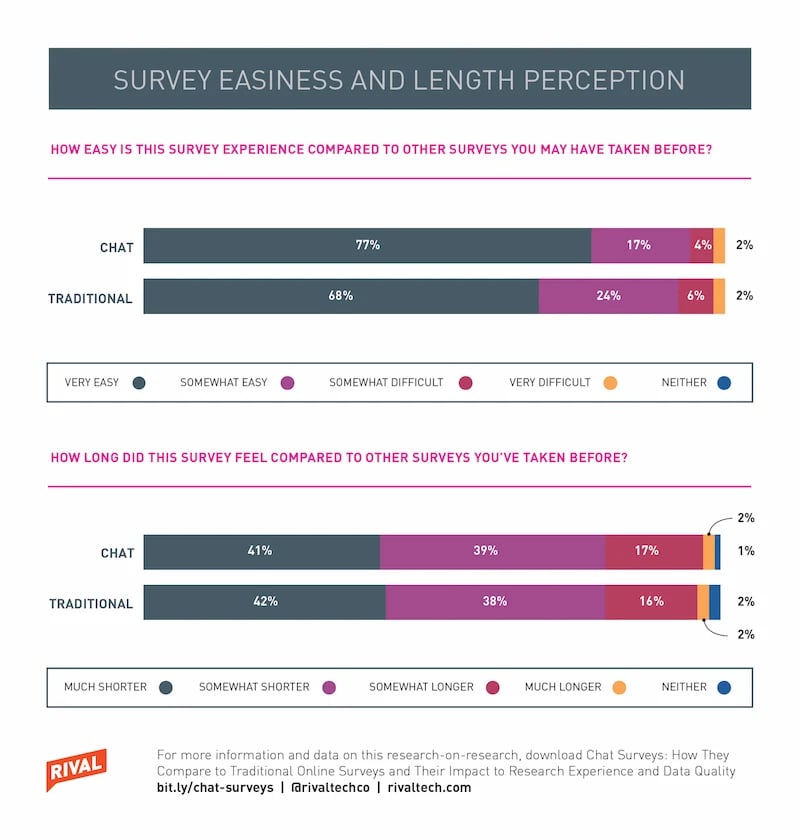

Ease is interesting given that chats are very new. The chat interface and experience are familiar to respondents since chats use the same channels people use to message their friends and family. But as a channel for sending out surveys, chats are new, so we were curious whether research participants found the new experience difficult. The data shows chats are seen as at least as easy to complete as a traditional survey, if not slightly easier.

Survey length is an important part of the experience because if the activity is too long, it could result in frustration on the part of the participants. Our data shows people who took the traditional survey and those who did the chats had similar perceptions of the length of the activities. This isn’t surprising since we used the same number of questions for both activities. It is, however, worth noting that for the traditional survey, we asked fewer questions than most surveys would.

Our study clearly shows people prefer chats over traditional surveys, but the question is why? The responses to our open-ended questions help answer this. We asked, “Do you have any general feedback on the survey experience today?” and got a lot of comments about the survey being the “best ever.” Many people said that, compared to traditional surveys, chats felt more like a real conversation.

While we are pleased to see that people love chats, our researcher hat encouraged us to more closely examine if chats introduced any demographic skews. Since chats are very new, some researchers want to know, rightfully so, about the demographic composition of the people who take chats.

Chats lend themselves well to distribution via SMS and messaging platforms—channels that are very popular with younger consumers. So while it makes logical sense that a younger demographic would find chats appealing, some researchers might wonder if this new way of engaging consumers would alienate other demographics.

Our research shows the demographics of the people who take chats are not significantly different from those who take traditional surveys. In other words, no skew was introduced into the data as a result of sending panel sample to a chat as opposed to a traditional survey.

We don’t see any significant differences between the two groups in terms of age distribution. There is a small increase among younger (under 44) consumers in the chat data but nothing that would suggest that an older generation is put off by the new survey method. The area where people live (rural, suburban or urban), education level and race distribution are very similar between the two groups.

Device distribution is also very close between the two groups; the most common device used was a laptop, followed closely by a smartphone. This isn’t surprising: we assumed people who take traditional surveys are already predisposed to using their computers to answer surveys, especially when invited through email.

To learn 8 tips for creating chats that maximize your response rates and deliver the best experience to research participants, click here.

The lack of demographic skew is great news. But what if we want to alter the demographic somewhat? Current sample sources, like panels, face the challenge of recruiting and retaining a younger demographic. As an industry, we have trained people to take surveys on computers, as opposed to mobile devices. What if we want to tap into people who already have their smartphones in their hands?

Results from this study do not answer that question, given the sample source used. We plan on doing more research-on-research studies in the future to examine how to change the demographic distribution of sample for the better. As a sneak peek, we ran the same chat described above with respondents recruited through ads we bought on Facebook (with no specific demographic targeting) and found:

- A significant bump in the 25 to 34 year-old age group (27% for the Facebook sample versus 21% for chats and 17% for traditional surveys)

- A huge jump in mobile-device usage (77% mobile device usage as opposed to about 33% from the panel sample)

- No significant difference in area (rural vs urban), ethnicity or education
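
For readers who want to gauge how much weight a 143-person sample can carry, here is a minimal Python sketch of a margin-of-error calculation. It is an illustration only, not the analysis used in this study: `proportion_interval` is a hypothetical helper, the normal-approximation (Wald) interval is an assumed method, and the only inputs taken from this report are the Facebook sample size (143) and the two Facebook percentages listed above.

```python
from math import sqrt


def proportion_interval(p, n, z=1.96):
    """Approximate 95% confidence interval for a sample proportion.

    Uses the normal-approximation (Wald) formula, which is an assumption
    made for this sketch and not a method stated in the report.
    """
    if n <= 0 or not 0.0 <= p <= 1.0:
        raise ValueError("need n > 0 and 0 <= p <= 1")
    margin = z * sqrt(p * (1.0 - p) / n)
    # Clamp to [0, 1] because a proportion cannot fall outside that range.
    return max(0.0, p - margin), min(1.0, p + margin)


FACEBOOK_N = 143  # Facebook-recruited respondents, per the methodology note

for label, share in [("aged 25 to 34", 0.27), ("on a mobile device", 0.77)]:
    low, high = proportion_interval(share, FACEBOOK_N)
    print(f"{label}: {share:.0%} (roughly {low:.0%} to {high:.0%})")
```

The narrower the gap between two groups, the more this interval matters. A full comparison between samples would also need the size of each panel group, which this report does not break out.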

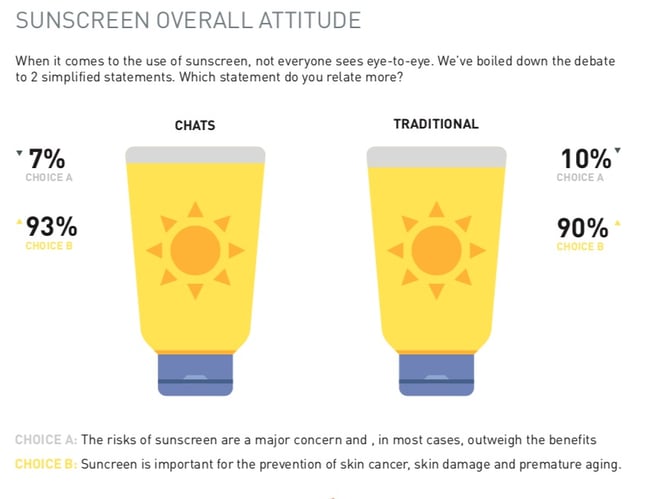

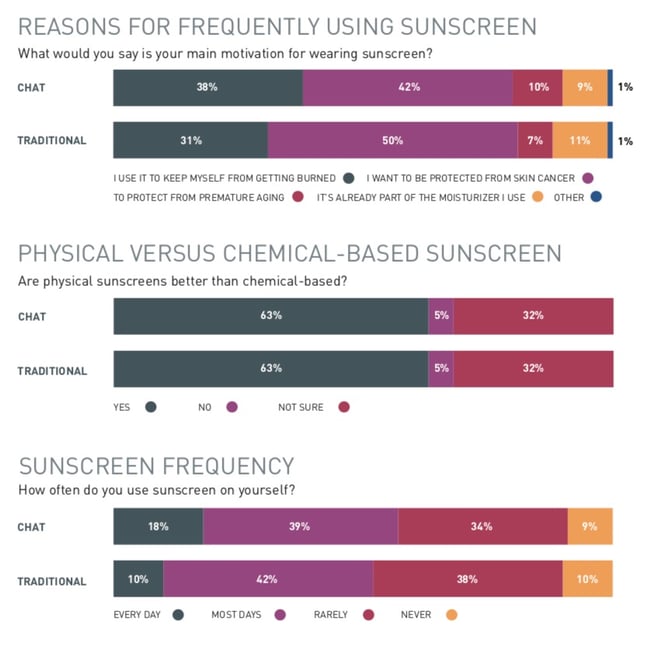

So, you might be wondering about the actual results of our chats and surveys. (As a reminder, our questions were about sunscreen usage and attitudes.) Did the “chat people” have a different view from those who took the traditional survey?

Not really. The results from the two groups were actually very similar. The same conclusions can be drawn from the two data sets.

This isn’t super surprising. After all, research participants for this study came from the same sample and, as we discussed earlier, had similar demographic composition. The chat itself does not introduce any skew when questions are the same and the sample source is the same.

Our research on chat surveys provides compelling evidence that they are a very promising way of engaging with consumers for insights. Chats provide a more fun, more enjoyable and easier experience to respondents without changing the demographic composition of the people you’re talking to.

At the same time, results from a chat we sent exclusively through Facebook suggest chats could open the door to reaching a significant but often ignored group in research: mobile-first consumers who are highly unlikely to participate when invited via email.

At Rival Technologies, our intention is to continue to innovate in this emerging space and ensure that companies can use the data they get from chats with a high degree of confidence. More importantly, we are committed to ensuring that the experience for respondents remains seamless and enjoyable.

A series of online surveys and chat surveys were sent to a sample of 2563 respondents from the InnovateMR panel and river sources in July 2018. One chat was sent to 143 respondents recruited exclusively via Facebook Messenger. Respondents were asked about their attitudes and opinions toward sunscreen use, as well as their experience answering the chat or survey.

Jennifer Reid is the Senior Methodologist at Rival Technologies. A pioneer in online research methodologies, Jennifer started her career at Angus Reid Group in 1998, where she was instrumental in building Canada’s first online research panel.

In 2003, Jennifer joined Vision Critical. As the company’s Executive Vice President of Corporate Strategy, she helped develop the methodology for Vision Critical’s proprietary community offering—an innovation that went on to disrupt the research industry in the next decade.

At Rival, Jennifer is once again helping shape the future of insights by leading the charge in the development of chats and other conversational research technologies.

A proud mother of three, Jennifer has a degree in economics from the University of British Columbia. She sits on the boards of the Angus Reid Forum and St. Mark’s College, an affiliate of UBC.